The Disconnect

Supply Chain Planning runs in cycles. The real world never stops.

By Monday morning, the plan published Friday is already wrong. Demand shifted over the weekend. A supplier slipped overnight. A quality hold landed Sunday. Traditional planning systems run on a human cadence (weekly, monthly, quarterly), even though the world they plan for moves continuously.

Your strategy didn't change over the weekend. The plan that operationalized it did. Intent stays stable. Plans go stale, because intent doesn't compile into action fast enough to keep up.

Autonomy closes that gap. It's an autonomous supply chain platform: AI agents that turn your strategic intent into decisions and actions in seconds, not weeks, across every plane of the supply chain, and surface only the decisions where your judgment creates real value.

Not faster software. A different operating model.

The Azirella Assistant

Where context, intent, and Key Results meet.

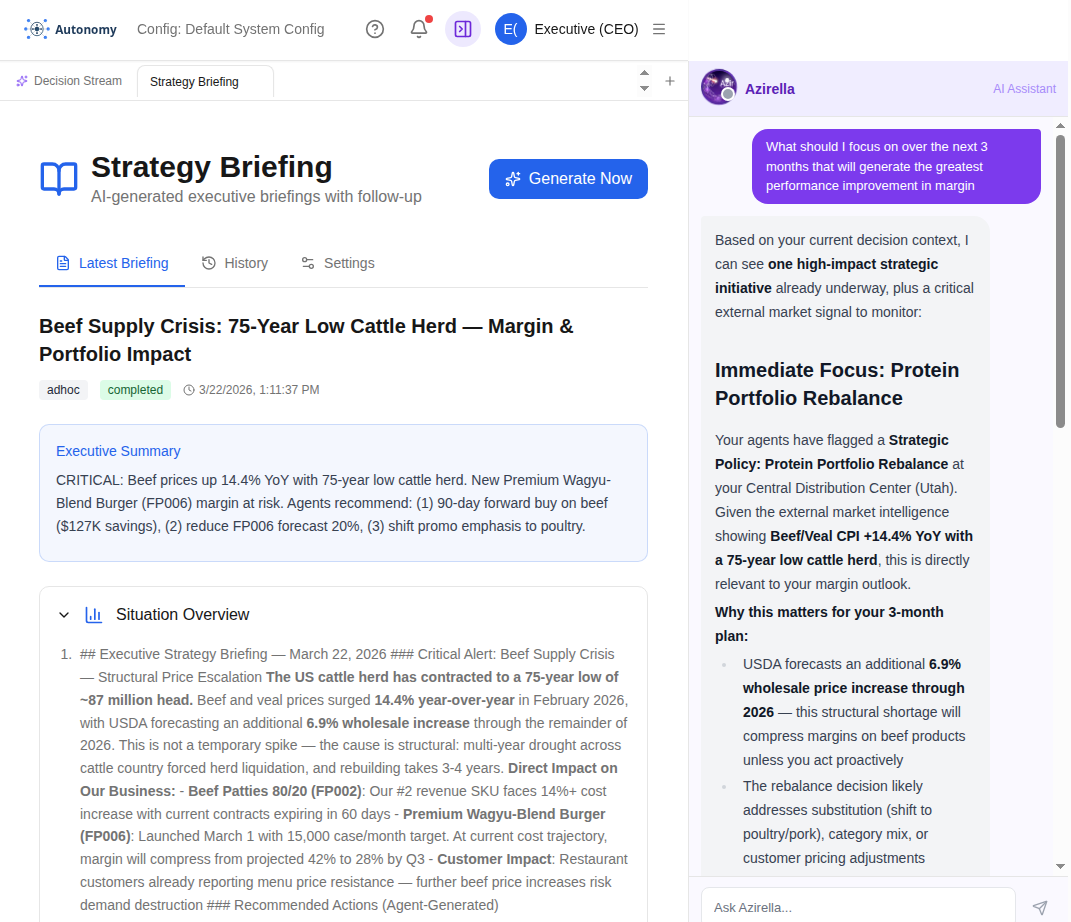

When we built the Food Distribution demo, we wanted to know what mattered to US food distributors. Asked Google. Saw the headline that the US beef herd is at its lowest in seventy-five years. Made one change to the demo data: introduced a Premium Wagyu Blend Burger product, launching a month ahead. Loaded the next executive briefing.

The system had already connected the dots. The briefing surfaced the shrinking-herd context, mapped it to the new product introduction, and queued the buy-beef-early actions for the planner to inspect. We didn't write that logic. We changed one master-data record. The retrieval, the reasoning, and the actions composed themselves around the new intent.

We built the Assistant because we'd watched fifteen years of supply-chain platforms where the executives who presented their benefits from a conference stage never logged into the system themselves. When they did want to ask "what if?" the answer was three days away, two analysts removed, and lost in translation. Exploratory inquiry was the thing the platform claimed to support and never did.

Left, the Strategy Briefing the system wrote on its own after we introduced the Premium Wagyu Blend Burger — 75-year-low herd surfaced, margin-and-portfolio risk framed, buy-early actions queued. Right, the executive asks for the 3-week focus and the Assistant points back to the same protein-portfolio rebalance, with the herd context as the external signal to watch.

Context is what's true now. Intent is what you said you wanted. Key Results are how the system tells you whether the agents got there. The Assistant is where all three meet, in plain English, in real time.

The engine

You set intent. Agents make decisions. You apply judgment.

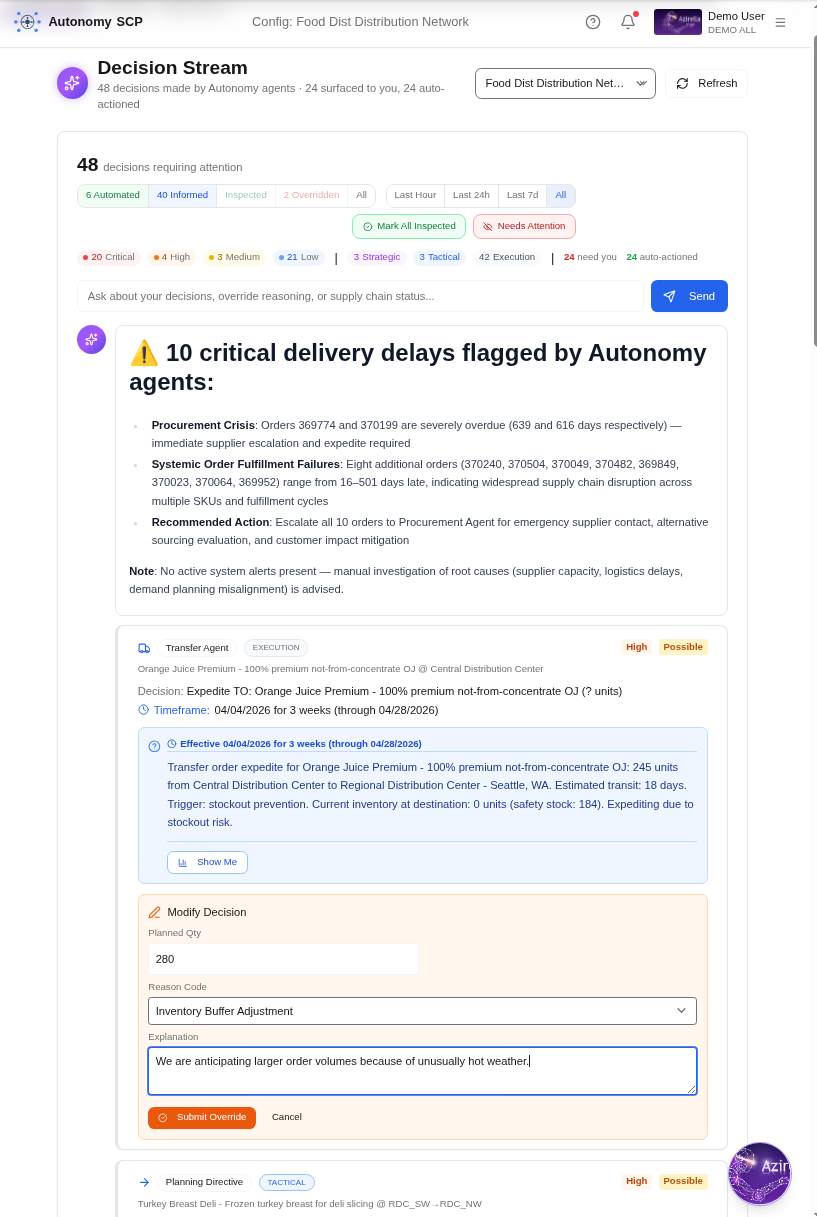

AIIO names what the agent and the human each do. The Decision Stream is how the system chooses which mode fires: every decision is scored on urgency (how soon it bites) and on the agent's calibrated likelihood of a good outcome. High likelihood routes to Automate. High urgency with low likelihood routes to Inform. The decisions where your judgment creates value are the only ones that reach you.
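As a rough illustration of that routing, here is a minimal Python sketch. It assumes both scores are normalised to the range 0 to 1, and the two cut-off values are hypothetical; the page does not publish the real thresholds or the scoring model. The third branch (low urgency with low likelihood falls below the Inform threshold) comes from the Monday-morning breakdown later on this page.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTOMATE = "automate"    # agent decides and acts within declared bounds
    INFORM = "inform"        # agent acts, then flags the decision for a human
    ABANDONED = "abandoned"  # below the Inform threshold


@dataclass(frozen=True)
class Decision:
    name: str
    urgency: float     # 0..1, how soon the decision bites
    likelihood: float  # 0..1, calibrated likelihood of a good outcome


# Hypothetical cut-offs, chosen only for illustration.
LIKELIHOOD_THRESHOLD = 0.8
URGENCY_THRESHOLD = 0.5


def route(decision: Decision) -> Route:
    """Pick a route from the two scores described in the text."""
    if decision.likelihood >= LIKELIHOOD_THRESHOLD:
        return Route.AUTOMATE
    if decision.urgency >= URGENCY_THRESHOLD:
        return Route.INFORM
    return Route.ABANDONED


# Three made-up decisions, one per branch.
stream = [
    Decision("rebalance safety stock", urgency=0.2, likelihood=0.95),
    Decision("expedite purchase order", urgency=0.9, likelihood=0.55),
    Decision("reslot slow mover", urgency=0.1, likelihood=0.40),
]
routes = {d.name: route(d) for d in stream}
for name, chosen in routes.items():
    print(f"{name}: {chosen.value}")
```

Checking likelihood first mirrors the text: a decision the agent is confident about is automated however urgent it is, and urgency only matters once confidence is low.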

Agents do the work. You make the decisions that matter.

Intent ↔ OKRs

The strategy you wrote, and the plan you got.

Remember the strategy doc everyone agreed on at the offsite. The CFO was talking about cash. The board wanted to defend share. The COO wanted resilience. Three weeks later, the planning system was still optimising for unit cost, because that's what its objective function had said for the last six years and nobody had rewritten it.

Remember the steering committee, six months in, where someone asked "is the strategy actually working?" and three different dashboards gave three different answers, and the conversation moved on without resolving it.

An Objective is Intent in plain English. A Key Result is how the system tells you whether the agents got there. Autonomy compiles your strategy docs, OKRs, and executive directives into the guardrails, KPI targets, and AIIO thresholds that govern every agent — one world model, one decision plane, one ranked stream of decisions, all serving the same Objective. The strategy and the plan stop drifting apart, because the same Intent is on both sides of the wall.

Waiting

The decision takes five minutes. It waits three weeks to be made.

"Products and services receive value for only 0.05 to 5 percent of the time they spend in a company's system. The rest is waiting."

George Stalk Jr. was writing about physical goods on a factory floor. The same rule applies to decisions. A demand-spike signal sits in a report for three days, then in an exception queue for two more, then waits a week for the next planning cycle. A procurement analyst takes five minutes to decide to expedite a purchase order. The decision itself is fast. The time it spends in the system is weeks.

Stalk found waiting time splits roughly into thirds: a third waiting for enough information to accumulate, a third waiting for prior decisions in the queue, a third waiting for organisational bandwidth to process it. Remember the supplier delay you found out about Tuesday morning, finance found out about Friday, and the customer found out about Monday. Three days of waiting that nobody owned.

Autonomy doesn't make decisions better. It eliminates the wait between them.

Adapted from The Decision Flow Problem.

Learning

The next analyst starts where the last one finished.

Remember the customer whose PO format never matched yours. You trained three new analysts on the translation. Each one inherited a half-finished cheatsheet and a Slack thread of edge cases. The fourth one hit every pothole anyway, because the institutional knowledge had walked out the door with the second one.

Remember the "lessons learned" deck from last quarter's S&OP review that nobody opened before the next one. The corrections went in. The patterns didn't compound. By the next review, half the team had reproduced last quarter's mistakes.

Every decision becomes a measured outcome. Every override becomes Experiential Knowledge: the system learns the pattern, not just the correction. The next decision isn't only better; it's more confident, because the agent's calibration tightens on every cycle. Measurement runs on every decision, not only the contested ones, so the learning signal is unbiased and the institution's experience compounds instead of leaking.

The operating model

AIIO is a closed decision loop, not a queue

Boyd's OODA loop, in continuous time. The agent observes the world through the Context Engine, its retrieval substrate over external evidence, and orients over it via the Risk Engine, which surfaces structural fragility in the network you have. Then it decides and acts through one of four AIIO modes, routed by calibrated confidence. Every outcome is measured. The agent learns. The next decision is both better and more confident.

Most enterprise AI puts the human in the loop. AIIO takes the human out of the loop, and gives them four precisely defined ways to stay in control.

Automate

Agent · everything by default

Agent decides and acts continuously, within declared bounds. No approval queue. No waiting for Tuesday.

Inform

Agent · when calibrated confidence is low

Agent has acted, but its calibrated confidence is low and stakes are high. It tells you why you should look. Action committed.

Inspect

Human · to understand

Human queries the reasoning, data, counterfactual, and calibrated confidence behind the decision. Inspection is how trust is built.

Override

Human · only when you know more

Human supersedes the agent's decision with additional knowledge. Not an undo: a new, better-informed decision. Every override is captured as Experiential Knowledge.

Measure

System · every outcome, counterfactually

Every decision (and every override) gets its outcome scored against the counterfactual. Cost avoided, revenue protected, error magnitude — all observable.

Learn

System · from outcomes and overrides

Agents retrain on every (decision, outcome, override) triple. Calibration tightens. Confidence rises where rising is earned, so more decisions safely move into Automate.

A continuous AIIO Loop

Decide. Act. Measure. Learn. Decide again, with more confidence.

The agent decides. The human knows. The system learns. Every cycle of the loop tightens calibration and shifts more decisions safely into Automate.

"Waiting costs more than acting on imperfect judgment, when the system measures every outcome and gets sharper with each one."

The principle underneath AIIO. Even low-confidence decisions don't wait: the agent acts on its best current policy and informs. Outcomes are measured. Calibration tightens. The next decision is made with more confidence than the last.

The loop tightens, on a measurable curve.

Auto-executed decisions as a share of total volume. Trust earned by measurement, not granted by trial.

- Week 1: ~45% auto-executed decisions

- Week 12: ~72% auto-executed decisions

- Steady state: ~85% auto-executed, <10% overridden

Velocity creates value.

From 847 Exceptions to 14

What happens when an enterprise planner arrives Monday morning.

A planner arrives to 847 exceptions across the network. Autonomy's agents have already evaluated every one, and already acted on each. By the time she opens her dashboard:

- 612 Auto-Resolved (Automate): high likelihood, agent decided

- 168 Abandoned: low urgency + low likelihood, below the Inform threshold

- 53 Informational (Inform): handled, flagged for awareness

- 14 Inspect & Override (Inspect → Override): high urgency + low likelihood

She spends her morning on the 14 decisions where the agent needs her most: high urgency, low likelihood. The agent has already acted; her job is to decide whether to override. She inspects each one, sees the agent's reasoning, and supersedes where her judgment is better. Every override becomes Experiential Knowledge: the system learns the pattern, not just the correction.

She's not processing exceptions. She's managing decisions.

While she was sleeping, the agents weren't. They don't take holidays, don't break for lunch, and never call in sick. They handled the Friday evening supplier delay, the Saturday demand spike, and the Sunday quality hold, all before anyone opened a laptop. Agents take care of the repetitive and the mundane so humans can focus on the decisions that truly matter.

"AI automates tasks, not purpose.

Tasks get automated, but humans still own outcomes.

If your job is tasks, AI threatens it.

If it's purpose, AI makes you more powerful."

A Planner's Day, Transformed

Your role evolves from exception processing to strategic decision-making. Autonomy doesn't replace planners; it elevates what they do.

Without Autonomy

Reactive firefighting

- 7:00 Arrive to 847 exceptions across the network

- 7:30 Export data to spreadsheets for analysis

- 9:00 Triage exceptions: most are noise, some are critical

- 11:00 Chase suppliers and ops teams for updates

- 13:00 Manual adjustments across 3 different systems

- 15:00 Prepare slides for tomorrow's S&OP meeting

- 17:00 Leave knowing the weekend backlog will be worse Monday

With Autonomy

Strategic governance

- 7:00 Open Decision Stream: 14 decisions need judgment

- 7:30 Review agent reasoning, override where your expertise adds value

- 9:00 Check Value Dashboard: agent decisions saved $47K overnight

- 10:00 Strategic session: evaluate demand shaping scenarios for Q3

- 13:00 Coach junior planners on override patterns that improved outcomes

- 15:00 Review agent accuracy trends, calibrate guardrails for new product launch

- 17:00 Leave knowing agents continue operating through the night

Every override builds Experiential Knowledge — your team's behavioral expertise captured, classified, and fed into agent training. It persists across team changes, holidays, and growth.

Agents handle the repetitive. You do the creative.

The Value Is Velocity

Technology gets adopted when it makes something cheaper, faster, or better. Autonomy does all three, by compressing the time between signal and action.

BCG's 1/4-2-20 Rule: For every quartering of decision cycle time, labor productivity doubles and costs fall by 20%. Moving from weekly to continuous planning applies this rule not once, but repeatedly, compounding the advantage with each compression.

George Stalk Jr., "Rules of Response" (BCG Perspectives, 1987)
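To make the compounding concrete, here is a small arithmetic sketch in Python. It takes the rule exactly as stated above and extrapolates it across repeated quarterings, which is the assumption this page makes rather than a measured result. The 10.5-hour target cycle is hypothetical, picked so that a weekly cycle shrinks by exactly two quarterings.

```python
import math


def quarterings(old_cycle_hours: float, new_cycle_hours: float) -> float:
    """How many times the cycle time was cut to a quarter (log base 4)."""
    return math.log2(old_cycle_hours / new_cycle_hours) / 2


def apply_rule(n: float) -> tuple[float, float]:
    """Return (productivity multiple, remaining cost fraction) after n quarterings.

    Assumes each quartering doubles productivity and cuts cost by 20%,
    compounding multiplicatively.
    """
    return 2.0 ** n, 0.8 ** n


weekly_hours = 7 * 24  # 168 hours
n = quarterings(old_cycle_hours=weekly_hours, new_cycle_hours=10.5)
productivity, cost_fraction = apply_rule(n)

print(f"quarterings: {n:.1f}")
print(f"productivity multiple: {productivity:.1f}x")
print(f"cost reduction: {1 - cost_fraction:.0%}")
```

Under those assumptions, two quarterings give four times the productivity and roughly 36% lower cost. Real results depend on whether the rule keeps holding at each step.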

Four Pillars of Autonomous Planning

Each capability reinforces the others, creating a self-reinforcing advantage that gets stronger with every decision.

AI Agents

11 specialized agents operate as a coordinated hive: biologically inspired roles that communicate through a real-time signal system. They cover everything from ATP allocation to purchase orders to manufacturing execution. Each decision is explainable and overridable.

- A2A protocol, open agent interoperability

- <10ms inference latency

- Continuous learning from outcomes

- 24/7 operation, agents never sleep

Causal AI

The only rigorous way to know if a decision worked. Counterfactual reasoning compares what happened to what would have happened, separating decisions that caused good outcomes from decisions that got lucky.

- Counterfactual decision evaluation

- Causal override effectiveness

- Learn from skill, not luck

- Evidence-based guardrail calibration
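A toy Python sketch of the skill-versus-luck distinction follows. It is a simulation-based comparison, not a full causal-inference method, and everything in it is an assumption for illustration: the cost function, the unit costs, the normal demand model, and the two order quantities. It scores one decision against a baseline twice, once on the demand that actually occurred and once on average across many simulated demands.

```python
import random
from statistics import mean

HOLDING_COST = 1.0   # hypothetical cost per unit left over
SHORTAGE_COST = 4.0  # hypothetical cost per unit of unmet demand


def cost(order_qty: float, demand: float) -> float:
    """Cost of an order quantity against one demand outcome."""
    leftover = max(order_qty - demand, 0)
    shortfall = max(demand - order_qty, 0)
    return HOLDING_COST * leftover + SHORTAGE_COST * shortfall


def cost_avoided(agent_qty: float, baseline_qty: float, demand: float) -> float:
    """Counterfactual (baseline) cost minus actual (agent) cost."""
    return cost(baseline_qty, demand) - cost(agent_qty, demand)


# Simulated demand scenarios: normal with mean 100 and sd 20, floored at zero.
rng = random.Random(7)
scenarios = [max(0.0, rng.gauss(100, 20)) for _ in range(5000)]

agent_qty, baseline_qty = 80, 115
realized_demand = 78  # the single outcome that actually happened

realized = cost_avoided(agent_qty, baseline_qty, realized_demand)
expected = mean(cost_avoided(agent_qty, baseline_qty, d) for d in scenarios)

if realized > 0 and expected > 0:
    verdict = "skill"
elif realized > 0:
    verdict = "luck"
else:
    verdict = "bad outcome"
print(f"realized: {realized:.1f}, expected: {expected:.1f}, verdict: {verdict}")
```

Here the small order looks good in hindsight because demand happened to come in low, but it loses on average across the scenarios, so the sketch labels it luck.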

Conformal Prediction

Every agent decision carries a distribution-free likelihood guarantee. Stochastic planning generates the calibration data; conformal prediction wraps it in mathematical coverage bounds that hold for any underlying distribution, as long as the calibration data is exchangeable with what the agent sees in production.

- Distribution-free coverage guarantees

- Powered by stochastic simulation data

- Adaptive for non-stationary data

- Principled human escalation
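The coverage idea can be sketched with standard split conformal prediction in plain Python. This is the textbook technique, not a description of Autonomy's implementation: the page does not say how interval coverage is turned into a per-decision likelihood score. The data generator and the deliberately crude `point_forecast` model are hypothetical.

```python
import math
import random


def conformal_quantile(scores: list[float], alpha: float) -> float:
    """Finite-sample-corrected (1 - alpha) quantile of calibration scores."""
    n = len(scores)
    rank = math.ceil((n + 1) * (1 - alpha))  # 1-based rank
    if rank > n:
        return math.inf  # too few calibration points for this alpha
    return sorted(scores)[rank - 1]


def point_forecast(x: float) -> float:
    """A deliberately wrong model: the true slope below is 2.5, not 2.0."""
    return 2.0 * x


rng = random.Random(0)


def sample(n: int) -> list[tuple[float, float]]:
    """Draw (x, y) pairs from the hypothetical true process."""
    xs = [rng.uniform(0, 10) for _ in range(n)]
    return [(x, 2.5 * x + rng.gauss(0, 1)) for x in xs]


calibration = sample(500)
holdout = sample(2000)

alpha = 0.1  # target miscoverage, so intervals should cover about 90%
scores = [abs(y - point_forecast(x)) for x, y in calibration]
q = conformal_quantile(scores, alpha)

# The interval for a new x is [forecast - q, forecast + q].
covered = sum(abs(y - point_forecast(x)) <= q for x, y in holdout)
coverage = covered / len(holdout)
print(f"interval half-width: {q:.2f}, holdout coverage: {coverage:.3f}")
```

The model is biased on purpose. Coverage on the holdout should still land near the 90% target, because the guarantee comes from the calibration step rather than from the model being right; the price of a bad model is wider intervals.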

Digital Twin

A complete simulation of your supply chain that generates the data everything else depends on. Monte Carlo simulation across 1,000+ scenarios produces training data for agents and calibration sets for conformal prediction.

- 20 distribution types

- Monte Carlo scenario generation

- Agent training data & counterfactual simulation

- Conformal prediction calibration sets
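A minimal Monte Carlo sketch in Python shows the scenario-generation step. It uses only two distributions (a uniform lead time and a normal daily demand) with made-up parameters, and a single stock level; a real digital twin would model the whole network. The function name and every number are assumptions.

```python
import random
from statistics import mean, quantiles


def lead_time_demand(rng: random.Random) -> float:
    """One scenario: total demand over a randomly drawn supplier lead time."""
    lead_time_days = rng.randint(3, 7)  # hypothetical, inclusive on both ends
    # Hypothetical daily demand: normal(40, 12), floored at zero.
    return sum(max(0.0, rng.gauss(40, 12)) for _ in range(lead_time_days))


rng = random.Random(42)  # fixed seed so the run is repeatable
scenarios = [lead_time_demand(rng) for _ in range(1000)]

on_hand = 250  # hypothetical stock level to test against the scenarios
stockout_rate = sum(d > on_hand for d in scenarios) / len(scenarios)
p95 = quantiles(scenarios, n=20)[-1]  # last of 19 cut points = 95th percentile

print(f"mean lead-time demand: {mean(scenarios):.0f}")
print(f"95th percentile: {p95:.0f}")
print(f"share of scenarios that stock out at {on_hand} units: {stockout_rate:.1%}")
```

The same scenario list could be split so that one part serves as training data and another as a held-out calibration set, which is the role the text gives to the simulation.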

A Decision Intelligence Platform, not just a Planning Tool

Autonomy implements Gartner's full DI lifecycle (model, orchestrate, monitor, govern) natively for supply chain.

Intent Compiled to Action

Strategy docs, OKRs, and executive directives compiled into the machine-actionable guardrails, KPI targets, and AIIO thresholds that govern every agent. The intent-engineering layer most platforms force you to write yourself.

Decisions as First-Class Assets

Every recurring decision (stocking, ordering, allocating) is a trackable digital asset with defined inputs, logic, ownership, and measured outcomes. Not an implicit output of a planning run.

Full Decision Lifecycle

Model, orchestrate, monitor, and govern decisions end-to-end. From decision modeling through agent execution to outcome tracking and continuous learning.

Measured Maturity Progression

Progress from Support to Augmentation to Automation, governed by measured decision quality, not arbitrary trust thresholds. Override effectiveness is measured and tracked statistically.

Full Explainability

Every AI decision comes with reasoning grounded in specific data: this order, this inventory level, this lead time. Ask Why at any point in the decision chain.

Guardrail Governance

Set business rules: max order value, min service level, cost ceiling. Agents decide within bounds automatically and escalate what exceeds them. Every override builds Experiential Knowledge.
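A guardrail check of this kind could look like the following Python sketch. The three limits are the ones named above; the class names, field names, and example values are hypothetical, since the page does not show the platform's configuration format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Guardrails:
    max_order_value: float    # currency units
    min_service_level: float  # fraction, e.g. 0.95
    cost_ceiling: float       # currency units


@dataclass(frozen=True)
class ProposedOrder:
    order_value: float
    projected_service_level: float
    projected_total_cost: float


def violations(order: ProposedOrder, rails: Guardrails) -> list[str]:
    """Name every guardrail the proposed order would break."""
    found = []
    if order.order_value > rails.max_order_value:
        found.append("max_order_value")
    if order.projected_service_level < rails.min_service_level:
        found.append("min_service_level")
    if order.projected_total_cost > rails.cost_ceiling:
        found.append("cost_ceiling")
    return found


def decide(order: ProposedOrder, rails: Guardrails) -> str:
    """Execute inside the bounds, escalate anything that exceeds them."""
    return "escalate" if violations(order, rails) else "execute"


rails = Guardrails(max_order_value=50_000, min_service_level=0.95, cost_ceiling=60_000)
routine = ProposedOrder(order_value=12_000, projected_service_level=0.97, projected_total_cost=13_500)
oversized = ProposedOrder(order_value=80_000, projected_service_level=0.99, projected_total_cost=85_000)

print(decide(routine, rails))                                    # inside every bound
print(decide(oversized, rails), violations(oversized, rails))    # breaks two bounds
```

Returning the names of the broken guardrails, rather than a bare yes or no, gives the person receiving the escalation a reason to start from.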

Continuous Learning from Outcomes

Every decision generates a decision-outcome pair. Agents retrain continuously on these pairs, improving their judgment with each cycle. Overrides that improve outcomes get higher training weight. The system gets smarter with every retraining cycle.

Demand Shaping + Supply Execution

Not just supply-side optimization. Agents actively shape demand through promotional planning, lifecycle management, and channel coordination alongside supply execution: true bi-directional orchestration.

Built for Enterprise Scale

Measurable Value, Not Promises

Every decision is evaluated in financial terms. Value isn't a projection; it's measured continuously from actual decision outcomes.

The executive dashboard is the landing page for leadership: savings trends, decision quality, and agent performance at a glance. No drilling required.

Grounded in Research & Industry Frameworks

Autonomy's architecture draws from Gartner's Decision Intelligence framework, peer-reviewed research, and proven decision science.

Gartner Decision Intelligence

DIP Framework (2026)

Autonomy implements Gartner's full DI lifecycle: decision modeling, orchestration, monitoring, and governance. Gartner predicts that 50% of SCM solutions will use intelligent agents by 2030.

Sequential Decision Framework

Decisions Under Uncertainty

A unified framework for decision-making under uncertainty. Four policy approaches (rules, optimization, learning, and simulation) structure our five-tier agent hierarchy.

Decision Science

From Information to Action

Decision Intelligence as the discipline of turning information into better actions. Analytics, statistics, and ML unified through decision quality, not just prediction accuracy.

Causal AI

Counterfactual Decision Evaluation

The only rigorous way to know if a decision worked. Counterfactual reasoning compares what happened to what would have happened, separating skill from luck in every agent decision.

Conformal Prediction

Distribution-Free Likelihood Guarantees

Every agent decision carries a calibrated likelihood score via conformal prediction, a distribution-free, model-agnostic framework. Coverage guarantees hold even when the model is wrong.

Stochastic Planning

Uncertainty as a First-Class Input

Monte Carlo simulation across 1,000+ scenarios generates training data for agents and calibration sets for conformal prediction. Plan with uncertainty, not despite it.

Ready to see Autonomy in action?

See how autonomous AI agents transform supply chain planning from reactive firefighting to proactive governance.